6 Conclusion

This chapter first clarifies how the research question and objectives (Section 1.4) were addressed. It then outlines my contribution to knowledge and offers recommendations to developers of future BCMI systems. It closes by briefly introducing new research goals that go beyond the scope of this thesis.

Research Question and Objectives

To answer the research question, ‘How can an affordable and open-source BCMI system be created to support meditation practices in NFT and artistic performance settings?’, I addressed all of my ROs:

RO1: The literature review identified EEG correlates (neuromarkers) of meditative states and specific methods that can help induce and maintain these states (Sections 3.2, 3.3, 3.4 and 3.5).

RO2: The research developed the affordable and open-source BCMI-2 system based on the literature review findings (Sections 5.1 and 5.2).

RO3: The research tested BCMI-2’s suitability to support meditation practices in NFT and artistic performance settings (Sections 5.3 and 5.4).

RO4: Based on the knowledge gained, I provide recommendations for researchers who are interested in using BCMI-2 or developing new BCMI systems to support meditation practices in NFT and artistic performance settings.

Contribution to Knowledge

By addressing these objectives, my research makes two contributions:

- the creation of the BCMI-2 system and

- the recommendations based on the knowledge gained while developing and testing its suitability to support meditation practices in NFT and artistic performance settings.

BCMI-2 system

BCMI-2 is a stable system that combines two entrainment methods, neurofeedback protocols and ARE, in a novel way to support meditation practices. It embeds the neurofeedback reward sound as an integral element within the ARE, creating interactive and engaging soundscapes. BCMI-2 uses an affordable research-grade OpenBCI board to measure, amplify and digitise multi-channel EEG, combined with the free audio programming environment SuperCollider for the remaining interfacing steps. BCMI-2 is fully open-source and, from the acquisition step onwards, customisable within one programming environment (SuperCollider). This keeps the workflow clear by removing the need to run multiple software applications or IDEs simultaneously (e.g. one to process EEG and another to generate music). Finally, for those interested in developing new BCMI systems based on BCMI-2, SuperCollider offers high-quality audio, versatile composition tools and a vibrant research community that can help if needed.

As we can practise various meditative states with different musical methods, BCMI-2’s customisable steps for electrode location, feature extraction, classification, mapping and sound control parameters could be valuable for:

- NFT practitioners who wish to design immersive soundscapes for neurofeedback protocols

- artists who wish to express themselves with physiological computing

- meditation practitioners who wish to understand meditation from a scientific perspective

Users with programming skills can customise BCMI-2’s neurofeedback protocols and ARE parameters. We can select up to eight channels to record raw EEG signals and extract multiple frequency bands and phase coherence features from these signals. We can classify these features and then map them to sound control parameters for NFT or other, more artistic sonification purposes. Furthermore, we can adjust the tempo and rhythmic variability of the ARE generator and replace the default frame drum samples with different ones. Also, BCMI-2 can spatialise sound in stereo or 4.0 surround. Although this research only tested the system’s ability to induce and maintain the SSC, by adjusting these parameters, we can entrain other meditative states (e.g. related to strong alpha brainwaves or hemispheric coherence).
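As an illustration only, the chain from feature extraction through classification to mapping can be sketched in a few lines. The sketch below is written in Python rather than SuperCollider and is not BCMI-2's code. The 250 Hz sampling rate, the 4–8 Hz band, the threshold values and the function names `band_power`, `classify` and `map_to_amplitude` are all assumptions chosen for the example.

```python
import cmath
import math


def band_power(samples, sample_rate, low_hz, high_hz):
    """Mean spectral power between low_hz and high_hz, using a naive DFT."""
    n = len(samples)
    mean = sum(samples) / n
    centred = [s - mean for s in samples]  # remove the DC offset
    power, bins = 0.0, 0
    for k in range(1, n // 2):
        freq = k * sample_rate / n
        if low_hz <= freq <= high_hz:
            coeff = sum(
                x * cmath.exp(-2j * math.pi * k * i / n)
                for i, x in enumerate(centred)
            )
            power += abs(coeff) ** 2 / n ** 2
            bins += 1
    return power / bins if bins else 0.0


def classify(value, threshold):
    """Hard classification: True when the feature exceeds the threshold."""
    return value > threshold


def map_to_amplitude(value, threshold, ceiling):
    """Map a feature value linearly onto a 0..1 amplitude, clipped at both ends."""
    if ceiling <= threshold:
        raise ValueError("ceiling must be greater than threshold")
    scaled = (value - threshold) / (ceiling - threshold)
    return min(1.0, max(0.0, scaled))


# Hypothetical one-second window: a 6 Hz sine standing in for one EEG channel.
SAMPLE_RATE = 250  # assumed sampling rate in Hz
window = [10.0 * math.sin(2 * math.pi * 6 * i / SAMPLE_RATE) for i in range(SAMPLE_RATE)]

theta = band_power(window, SAMPLE_RATE, 4, 8)    # illustrative band
rewarded = classify(theta, threshold=1.0)        # illustrative threshold
amplitude = map_to_amplitude(theta, threshold=1.0, ceiling=9.0)
```

In a real system, the amplitude value would drive a sound control parameter (for instance, the level of a reward sound), and the band, threshold and mapping curve would be chosen to suit the targeted meditative state.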

We can use BCMI-2 in NFT and artistic performance settings

- with the same setup as documented in Sections 5.4 and 5.5 to induce and maintain the SSC^1^ or

- by customising the system (e.g. the neurofeedback protocol and ARE parameters, the soundscape’s sounds and length) to help induce and maintain other meditative states.

As both of these options require some programming skills, ultimately, BCMI-2 is still a prototype system for use by technically skilled people.^2^

In May 2021, I discussed this proof-of-concept system with NFT experts, whose positive feedback included the following:

Feedback regarding the high-quality audio and customisability of BCMI-2:

It’s exciting to see that Krisztian’s research focuses on high-quality audio that’s highly customized to create cutting-edge bio/neurofeedback experiences. I’m intrigued by the potential of a system that generates music in real-time intending to train and entrain certain mental states by harnessing individually generated music. We’re delighted to support this scientific, beautiful, and artistic approach to edutainment, and its potential to enhance the human experience of millions. ~ Patrick Hilsbos, CEO @ Neuromore — neuromore.com

Feedback regarding the aesthetics of auditory NFT and the importance of aligning aspects of the feedback with aspects of the desired mental shift:

I agree with your perspective that the quality and pleasantness of the sounds are crucial for meditation or induction of inwardly directed attention. After all, everything affects the brain, not just contingent neural feedback, but any kind of stimulus, so we want to make sure the stimuli we use are aligned with our purpose of inducing meditative mental states. Your research project sounds very interesting - I’m sure it would be beneficial to many! ~ Revital Yonah, EEG technician @ BetterFly — btr-fly.com

Feedback regarding the importance of researching the effective use of sound in NFT:

From our conversation, I appreciate your understanding of how audio can play a critical role in the feedback process. It is an area that providers and softwares fail to place an appropriate degree of importance on in a lot of cases. I’m hoping that auditory feedback can see more development and research to show its benefits when compared to modalities that rely solely on visual feedback or simple discrete auditory feedback. ~ Cole Preuett, @ EEGStore — eegstore.com

Feedback regarding the importance of BCMI-2’s personalisable sound control parameters:

The developed system offers great flexibility in the creation of neurofeedback applications with immediate reaction to minor changes in the underlying psychophysical processes. This can be advantageous for various implementations of neurofeedback protocols. It would be great to see further dissemination of the results, maybe via an open knowledge base for sharing NFB-training protocols and user experiences. ~ Chris Veigl, Developer @ Brainbay — brainbay.nl

Since the whole idea of biofeedback/neurofeedback is direct and specific feedback and it is based on operant conditioning, it is quite important that the feedback (either audio or visual) is in line with that. That is the reason we also offer so many different types of feedback, certain feedback simply work better with one person than with another. Hope that helps Krisztian. ~ Representative @ Mind Media BV — mindmedia.com

Recommendations

To help future BCMI developers support meditation practices in NFT and artistic performance settings, based on the knowledge gained in this research, I recommend first

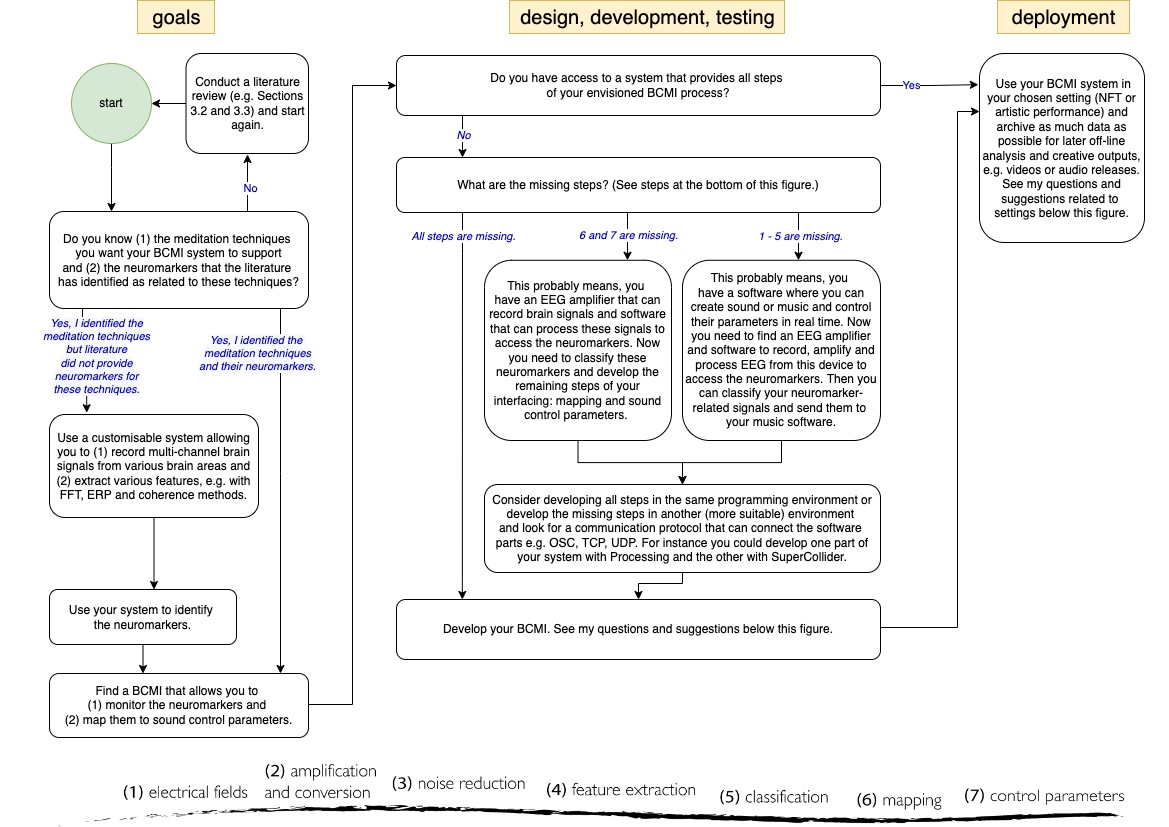

- consulting the flowchart in [@fig:bcmi-devflowchart] to identify the specific meditation techniques, their neuromarkers, and the missing steps of the envisioned BCMI and then

- considering a list of questions and suggestions below to help design the system. This list is first organised in the order of the BCMI steps and then thematically. To indicate the significant number of potential hardware and software combinations, many of my questions are open-ended.

Steps (1) electrical fields and (2) amplification and conversion

- Have you reviewed the available BCI systems for your BCMI? Some EEG amplifiers might come with software you can implement in your steps (see Section 3.1 for a general introduction and references to publications comparing systems).

- Do you (or your institution) have suitable EEG hardware you can experiment with, or will you have to purchase this hardware?

- Do you need your system to acquire EEG from multiple amplifiers or only one?

Step (3) noise reduction

- What options do you have for noise reduction and impedance measurement (e.g. a standalone application or SDKs provided by the developer of your EEG amplifier or libraries/classes native to considered IDEs)? What are the advantages and disadvantages of these options?

- Have you explored machine-learning options for noise reduction?

Steps (4) feature extraction and (5) classification

- What options do you have for feature extraction (e.g. a standalone application or SDKs provided by the developer of your EEG amplifier or libraries/classes native to considered IDEs)? What are the advantages and disadvantages of these options?

- If you can access signals classified by proprietary (not open-source) algorithms, are you planning to use them?

- Have you explored the literature on feature extraction methods used in BCI (Section 3.1), NFT (Sections 3.2 and 3.3) and artistic performance settings (Section 3.6)?

- Can your software application extracting the neuromarker-related features classify them and make them available for mapping? For instance,

    - can you classify your features as (programming) variables?

    - can you use a communication protocol (e.g. OSC, TCP, UDP) to send these signals to another software program?

Steps (6) mapping and (7) sound control parameters

- Do you know the setting in which you will use your system (e.g. NFT, artistic performance or both)?

- Is accuracy or aesthetics more important for your work, or are both required?

- Have you considered neurofeedback protocols

    - including or excluding sound with short or continuous feedback (Section 3.2)?

    - providing immediate or accumulative feedback (Chapter 4)?

- Have you considered employing gaming elements to increase your users’ engagement (Section 3.5.2 and Chapter 4)?

- Have you considered using spatial audio to increase the immersion of your audience (Section 3.5.2)?

- Have you reviewed the different approaches used for mapping data to sound (e.g. low- and high-level sonifications) (Section 3.7)?

- Have you explored entrainment methods that can help support your chosen meditation practice (e.g. auditory, visual or haptic entrainment, or their multimodal combination) (Sections 3.2, 3.3 and 3.4)?

- If you use neurofeedback protocols, will you adjust their thresholds manually, or will your software adjust them automatically (Dhindsa et al., 2018)?
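One simple way to automate threshold adjustment is to track a percentile of recent feature values so that the user is rewarded for roughly a fixed share of the time. The sketch below shows this idea under stated assumptions. It is not the method of Dhindsa et al. (2018) or of BCMI-2, and the class name, window length and target reward rate are hypothetical.

```python
from collections import deque


class AdaptiveThreshold:
    """Keep the reward rate near a target by tracking a percentile of recent values."""

    def __init__(self, target_reward_rate=0.6, window=120):
        if not 0.0 < target_reward_rate < 1.0:
            raise ValueError("target_reward_rate must be between 0 and 1")
        self.target = target_reward_rate
        self.history = deque(maxlen=window)  # oldest values drop out automatically

    def update(self, value):
        """Store a new feature value and return the updated threshold."""
        self.history.append(value)
        ordered = sorted(self.history)
        # Values above this position make up about `target` of the window.
        index = int((1.0 - self.target) * (len(ordered) - 1))
        return ordered[index]


# Hypothetical usage: feed one feature value per analysis window.
tracker = AdaptiveThreshold(target_reward_rate=0.75, window=120)
for feature_value in range(1, 101):
    threshold = tracker.update(feature_value)
```

A shorter window makes the threshold follow the user more closely, while a longer one makes training more demanding when the feature drifts. Which behaviour is preferable depends on the protocol and on the user's experience.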

NFT and artistic performance settings

- Have you considered how users with different levels of meditation experience will interact with your system? For instance, will the music it creates be engaging — for the user or an audience — if the user cannot successfully induce and maintain a meditative state?

- Can audience members test your system at your event or perhaps be part of your performance?

- Do you plan to visualise your brain signals as well?

- Have you considered how much detail of the interfacing process you should explain to your users or audience? My advice for artistic performances is to be as transparent as possible about the interfacing process, and ask the audience to choose between engaging with the performance intuitively or analytically. For instance, I asked my audience to either meditate with me with their eyes closed or monitor how my EEG’s sonification correlated with its visualisation on the screen behind me.

- Have you considered the need for assistance? While you probably do not need an additional person to conduct NFT sessions, performance settings can be much more demanding. Therefore, I advise organising support not only for setting up the technical gear but also for performing soundchecks.

- In a performance setting, do you plan to set up an entirely computer-controlled system, or will certain parts of the mapping be adjusted by a performer (e.g. the volume or spatialisation of a sonified EEG)? Do you plan to discuss the advantages and disadvantages of these two approaches to aesthetics with your audience?

Engaging with academic and public audiences can be a two-way process of giving and receiving information. During this research, I used my BCMI systems, or their parts, in artistic performances, demonstrations, workshops and other collaborative projects. I also presented my research without these systems at symposiums, conferences and other small events. People at these events gave me valuable feedback on my work, and some asked to be put on my mailing list so they could be informed about upcoming studies to participate in. These events also led to collaborative projects, enhanced my academic profile and developed my public speaking and performing skills. Therefore, I recommend that BCMI developers seek regular opportunities to disseminate their progress.

Active, passive or mutually-oriented BCMI

- What options do you have for active and passive BCI (e.g. a standalone application or SDKs provided by the developer of your EEG amplifier or libraries/classes native to considered IDEs)? What are the advantages and disadvantages of these options? For example, hard systems might be easier to develop than soft or hybrid ones, as the last two might require a good understanding of machine learning.

- Is an active, passive or mutually-oriented BCMI more suitable for your chosen meditation technique? Would a more conscious or spontaneous form of operant conditioning be more effective? For instance, in concentrative meditation, where users could focus consciously on one sound of a soundscape instead of the overall ambience, a hard BCI that relies on conscious operant conditioning might be more useful. However, as it is ultimately the user who decides how to pay attention and to what, this is an exciting inquiry. Krol, Andreessen and Zander (2018) discuss this issue as follows:

Care should be taken when presuming that mental states can be readily categorized as ‘spontaneous’ versus ‘voluntary’, as required by these definitions of BCI systems. Similarly, the activity ultimately used by any BCI system may not precisely fit one such category. A user who is aware of a passive BCI system might be influenced by the expectations they have of that system and voluntarily commit attentional resources to make sure that the ‘spontaneous’ activity takes place. A user might also attempt to consciously modulate this activity if results are not as expected. The other way around, an active BCI might rely on, or inadvertently make use of, brain activity that is not fully voluntarily controlled by the user. Meditation, as an example, seems to present an ambiguous mixture of both: it is the voluntary attempt to induce a state that is usually only achieved when contextual factors align. … the categorisation of a BCI system as (re)active or passive ultimately depends on the individual user and not on the system itself.

- Are you developing a system primarily for yourself or for others, and if for others, what technical skills are required to operate the system?

- Have you considered the scalability of your system? For instance, if you are building a minimum viable product – a proof-of-concept tool, as I did in this research – will it have a stable framework with clear code for you (or others) to expand on?

- Have you considered ethics-related issues when recording others’ EEG signals (e.g. will you ensure that participants’ data is stored safely)?

Technical and artistic skills

At the start of a BCMI project, you might have either primarily artistic or primarily technical skills. However, developing a BCMI requires both, so I advise finding resources that can help further develop the skills you lack. For instance:

- What expertise in music, BCI, or BCMI can your co-researchers, collaborators, and supervisors contribute to complement your own skillset?

- How can you develop relationships with individual researchers or research communities that have mastered these skills? For instance:

    - Do you know the key researchers in the field of BCMI (see Section 3.6)?

    - Do you have the writing skills to summarise your issue clearly and succinctly via direct email or internet forums?

    - Are you aware of relevant events where you can demonstrate and discuss your progress (e.g. NIME, ICAD, eNTERFACE and NFT events)?

Terminology, APM, personal development and creative outputs

Due to the interdisciplinary nature of BCMI research, some of its terminology is not used consistently across the literature. In Section 3.6.4, I briefly discuss this issue, specifically in relation to the terms ‘sonification’, ‘musification’ and ‘control’. I also encountered it with other terms in various domains in my literature review. While experienced researchers of interdisciplinary studies are aware of this inconsistency and of the fact that definitions evolve over time, a novice researcher could become troubled by it (especially if English is not their first language). Therefore, I advise using or designing a note-taking system that can help you keep track of these differences from the start of the research onwards.

For me, using APM as the overall research methodology was helpful. Therefore, I suggest considering it for other projects seeking to develop BCMI. Contrary to traditional waterfall-style management, in which the methods and literature review are established initially and not adjusted during the research, APM’s approach feels more natural. Its flexibility allowed overlapping projects to inform each other and emerging questions and methods to adapt to the course of the project’s development. However, as this flexibility could also be misused (e.g. by allowing too much time to address non-priority issues), I advise regular meetings with co-developers, stakeholders or supervisors to help monitor progress.

As we likely create meditation tools because we want to improve our own meditation practices, we can utilise the testing stages for our personal and spiritual development in actual meditation sessions.

I also advise considering the production of creative outputs during the process (e.g. audio releases or video documentation of performances) to help embody the richness of the research and expand its scope across and beyond disciplinary borders.

By considering these recommendations, I hope others can streamline their BCMI developments to support meditation in NFT and artistic performance settings. I am happy to provide further insights if needed.

New Goals

One of my new goals is to start addressing the new objectives of the BCMI-2 project (Chapter 5). Regarding the OpenBCI-SuperCollider Interface part of the BCMI-2 system (Section 5.2), I first plan to (1) program an automatic neurofeedback threshold calculation to help adjust the difficulty levels of the operant conditioning in NFT sessions, (2) add a heart-rate monitor to provide more comprehensive biofeedback and more data for later analysis and (3) investigate the potential use of the FEELTRACE instrument for recording users’ perceived emotions during sessions. Regarding the Shamanic Soundscape Generator part of the system (Section 5.3), I first plan to (1) program customisable options for accumulative neurofeedback based on the BCMI-1 neurogame (Chapter 4), (2) add extra drum tracks to help experiment with polyrhythmic patterns and (3) incorporate the Ambisonic Toolkit as an alternative to my current surround spatialisation method. Furthermore, I will invite researchers with advanced programming skills to contribute new feature extraction methods and sound control parameters to BCMI-2 via the projects’ GitHub repositories.

Regarding the use of BCMI-2, my first goal is to carry on supporting my own meditation practice with the system to eventually be able to demonstrate a breakthrough into an ASC during a live artistic performance.^3^ The second goal is to conduct new NFT sessions with more participants in randomised, placebo-controlled, double-blind studies to help compare the effectiveness of different neurofeedback protocols and ARE parameters in inducing and maintaining different meditative states.

To attract the attention of academic and non-academic researchers for collaborations, I plan to build a website that hosts the system’s documentation with concise video tutorials and shares code, music and experiences with the community of interest. In line with De la Hera Conde-Pumpido’s (2017) recommendations linked to social aspects of persuasive games (Section 3.5.1), this website should help guide and foster conversations to establish relationships between users and developers. To gain attention, I also plan to start a podcast where artists and researchers can discuss BCMI, and to organise a symposium at the University of Essex. Finally, I will continue discussing funding applications with academic researchers and relevant industries to gain financial support for these new goals.

1. Section 5.7 provides insights into how I meditate to induce and maintain the SSC while listening to the shamanic soundscapes generated by the system.

2. Another way to use BCMI-2 is to take it apart and connect its parts to new software. For instance, we could develop new interactive soundscapes controlled by brain signals classified in the OpenBCI-SuperCollider Interface or develop new software to classify signals to be mapped via OSC to sound parameters in the Shamanic Soundscape Generator.

3. The new neurofeedback protocol I have been developing for this artistic setting is based on Flor-Henry, Shapiro and Sombrun (2017), who demonstrated neuromarkers of the SSC. This protocol and other ongoing projects stemming from this research are outlined in Appendix 5.